
Most enterprise pricing decisions still follow the same pattern they did fifteen years ago. Analysts pull data from dashboards, export it into spreadsheets, model scenarios manually, and circulate recommendations for sign-off. By the time a price change reaches a regional distribution channel, the market has already moved.

This isn't a data problem. Organizations have more data than they've ever had. It's an architecture problem — and it's increasingly expensive.

71% of CPG leaders said they adopted AI in at least one business function, up from 42% in 2023 — yet no CPG player has truly scaled its AI capabilities, and most leaders still lack clarity on where in the value chain the real value is concentrated.

66% of CPG companies growing both revenue and profit had invested in RGM systems, compared to only 49% of companies that weren't growing both. Meanwhile, 40% of enterprise applications will be integrated with task-specific AI agents by end of 2026 — up from less than 5% today — with agentic AI potentially driving 30% of enterprise application software revenue by 2035, surpassing $450 billion.

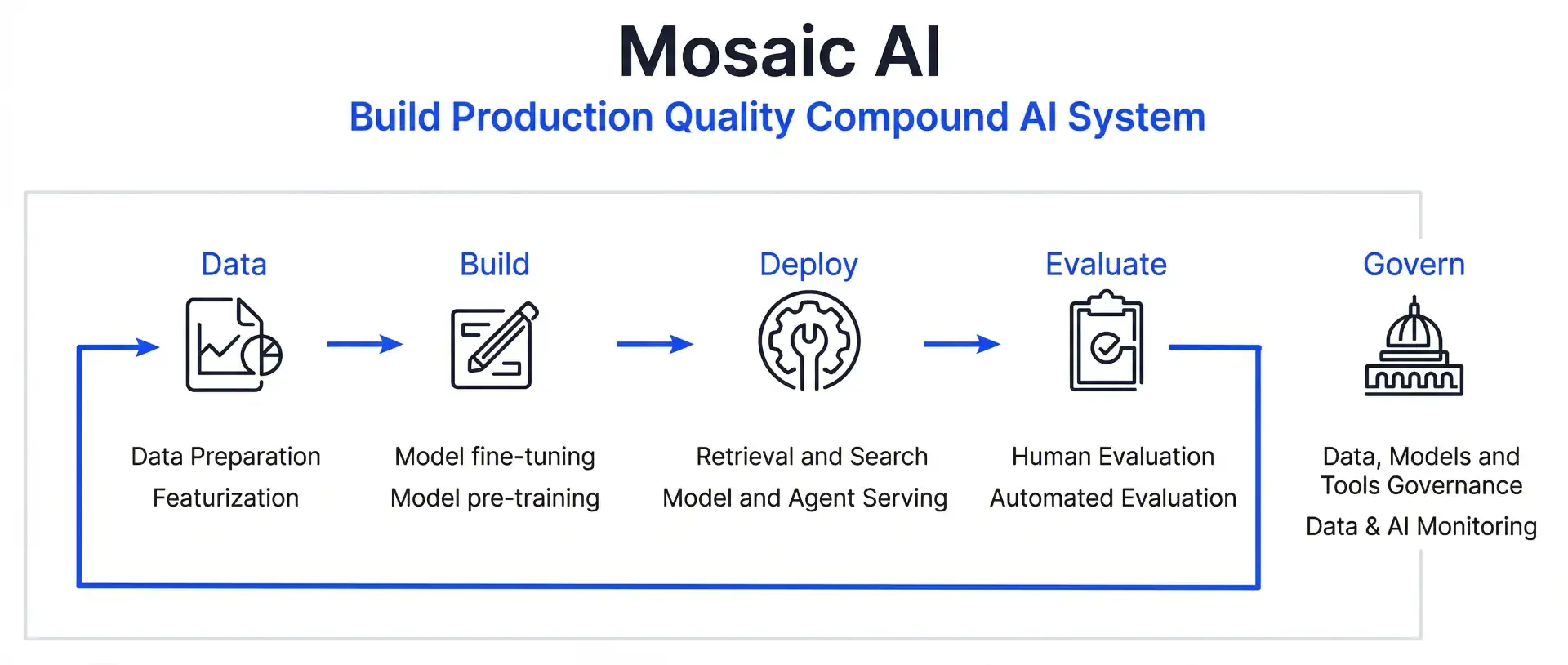

The window for deliberate, structured deployment is now. Databricks Mosaic AI provides the infrastructure to build it properly.

The first instinct of most engineering teams building AI-powered pricing tools is to route everything through a single large language model. It fails quickly and for a predictable reason: revenue management requires contradictory analytical modes — inventory logic, statistical modelling, and unstructured competitor intelligence — running in parallel. Feeding all of that into a single prompt context produces hallucinated constraints and malformed outputs that break downstream API connections.

The correct architecture is a multi-agent supervisor system built on the Mosaic AI Agent Framework. This is not a theoretical preference — it's what production deployments require.


The Databricks Mosaic AI architecture enables compound AI systems: multiple specialized components chained together, each optimized for a specific reasoning task, coordinated by a supervisor that synthesizes their outputs into a concrete business action.

The Mosaic AI Agent Framework enables Databricks AI workflows where tasks are decomposed, routed to the right specialist, and reassembled into a governed output, all within a single auditable execution trace. In a revenue growth management (RGM) context, this means a single pricing query, such as "Why did Northeast margins drop and what should we do?", is automatically broken into supply chain, competitive, and elasticity sub-tasks. Each sub-task is answered by a specialist agent with access to the right data, and the results are synthesized into a single actionable pricing recommendation.

A production RGM agent architecture built on this pattern would look like this:


| Agent | Role | RGM Data Source |
|---|---|---|
| Supervisor Agent | Receives the trade or pricing query, routes sub-tasks, synthesizes final output | Natural language input + execution history |
| Inventory Sub-Agent | Checks stock positions, supply constraints, and replenishment timelines via Unity Catalog SQL | Structured inventory and supply chain tables |
| Competitor Sub-Agent | Scans indexed competitor shelf prices, promotional activity, and channel pricing via Vector Search | Historical and real-time competitive pricing data |
| Elasticity Sub-Agent | Calculates price sensitivity by SKU, channel, and region to determine optimal price points | Historical sales, volume, and promotional response data |

The supervisor synthesizes all three outputs and produces a concrete trade pricing or promotional recommendation — or, in a human-in-the-loop design, writes a proposed action to a governed Unity Catalog staging table for revenue or category manager approval. Each execution trace is logged in MLflow, making the decision path fully auditable.
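The routing pattern in the table can be sketched in plain Python. This is a minimal illustration of the supervisor idea only, not the Mosaic AI Agent Framework API: the `Finding` and `Recommendation` types, the three agent functions, and the canned strings they return are all hypothetical stand-ins for calls that would query governed tables or a vector index.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Finding:
    agent: str    # which sub-agent produced the finding
    summary: str  # short, human-readable result


@dataclass
class Recommendation:
    query: str
    action: str
    trace: List[Finding] = field(default_factory=list)  # audit trail


# Hypothetical sub-agents. The returned values are made-up placeholders;
# a real agent would query inventory tables, a vector index, or a model.
def inventory_agent(query: str) -> Finding:
    return Finding("inventory", "placeholder: days on hand above target")


def competitor_agent(query: str) -> Finding:
    return Finding("competitor", "placeholder: competitor shelf price fell")


def elasticity_agent(query: str) -> Finding:
    return Finding("elasticity", "placeholder: demand is price-sensitive")


SUB_AGENTS: Dict[str, Callable[[str], Finding]] = {
    "inventory": inventory_agent,
    "competitor": competitor_agent,
    "elasticity": elasticity_agent,
}


def supervise(query: str) -> Recommendation:
    """Fan the query out to every sub-agent, then synthesize one action."""
    trace = [agent(query) for agent in SUB_AGENTS.values()]
    # Placeholder synthesis: a real supervisor would reason over the findings.
    action = "PROPOSE_REVIEW: " + "; ".join(f.summary for f in trace)
    return Recommendation(query=query, action=action, trace=trace)


if __name__ == "__main__":
    rec = supervise("Why did Northeast margins drop and what should we do?")
    print(rec.action)
    for finding in rec.trace:
        print(f"  [{finding.agent}] {finding.summary}")
```

Keeping the per-agent findings on the recommendation object is what makes the decision path inspectable later; in the architecture described here that role is played by the MLflow execution trace.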

This architecture matters for RGM governance as much as it matters for accuracy. A category manager or revenue director who can trace exactly why an agent recommended a promotional price reduction — and see the inventory overhang, the competitive index movement, and the elasticity band that drove it — is one who will trust the system enough to act on it rather than override it.
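To make the elasticity signal concrete, here is one common way to estimate price elasticity: the slope of a log-log regression of volume on price. The article does not specify which method the Elasticity Sub-Agent uses, so treat this as an assumed, simplified illustration; the function name and inputs are hypothetical.

```python
import math
from typing import Sequence


def log_log_elasticity(prices: Sequence[float], volumes: Sequence[float]) -> float:
    """Estimate price elasticity as the OLS slope of ln(volume) on ln(price)."""
    if len(prices) != len(volumes) or len(prices) < 2:
        raise ValueError("need at least two matching price/volume pairs")
    xs = [math.log(p) for p in prices]
    ys = [math.log(v) for v in volumes]
    x_mean = sum(xs) / len(xs)
    y_mean = sum(ys) / len(ys)
    sxx = sum((x - x_mean) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("prices must vary to estimate elasticity")
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    return sxy / sxx
```

An estimate below -1 suggests volume responds more than proportionally to price, which is the kind of evidence a reviewer would expect to see next to a recommended price reduction. A production model would typically also control for promotions, seasonality, and channel.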

The operational shift that Databricks Mosaic AI enables in RGM is not incremental. It is structural.

Do you know?

An analysis of more than 140 digital and AI use cases across the CPG value chain estimates that gen AI use cases could unlock an additional $160 billion to $270 billion annually in EBITDA for CPG companies globally, with the greatest value concentrated in consumer insights and customer and channel management, precisely the domains an agentic RGM system operates in.

Explore the broader data platform strategy that makes these deployments possible in the Polestar Analytics Data Migration and Modernization eBook, or see how data modernization sets the foundation for agentic systems.

The Mosaic AI Agent Framework is explicitly built for enterprise environments where autonomous execution must be paired with human oversight — and in RGM, this is exactly the right design. Autonomous pricing without governance is not a capability; it is a liability.

Databricks Mosaic AI makes the human-in-the-loop pattern a first-class architectural feature, not an afterthought. Rather than the agent triggering an external API to execute price or promotional changes autonomously, the Supervisor Agent is configured to write proposed pricing actions into a governed Unity Catalog staging table.

This staging layer gives revenue managers, category directors, or trade marketing leads a structured view of the agent's full reasoning trace (which sub-agents ran, what signals each detected, what the elasticity calculation returned, and what promotional scenario was modelled) before any recommendation moves into execution.

This design reflects a core Databricks Mosaic AI feature: the ability to insert governance checkpoints at any stage of the agent workflow without breaking the automation chain. The agent handles the continuous monitoring, the data synthesis, and the recommendation generation. The human handles the final call — informed by a complete audit trail they didn't have to build themselves.
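The approval gate can be illustrated with a small sketch. It uses an in-memory SQLite table purely as a stand-in for a governed Unity Catalog staging table, and the table name, columns, and helper functions are assumptions made for illustration rather than anything defined by Databricks.

```python
import sqlite3
from typing import List, Tuple


def open_staging() -> sqlite3.Connection:
    """Create an in-memory stand-in for the governed staging table."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        """
        CREATE TABLE proposed_price_actions (
            id INTEGER PRIMARY KEY AUTOINCREMENT,
            sku TEXT NOT NULL,
            region TEXT NOT NULL,
            proposed_change_pct REAL NOT NULL,
            rationale TEXT NOT NULL,
            status TEXT NOT NULL DEFAULT 'PENDING',
            reviewer TEXT
        )
        """
    )
    return conn


def propose(conn: sqlite3.Connection, sku: str, region: str,
            change_pct: float, rationale: str) -> int:
    """The agent writes a proposal; it never executes the change itself."""
    cur = conn.execute(
        "INSERT INTO proposed_price_actions "
        "(sku, region, proposed_change_pct, rationale) VALUES (?, ?, ?, ?)",
        (sku, region, change_pct, rationale),
    )
    conn.commit()
    return cur.lastrowid


def review(conn: sqlite3.Connection, action_id: int,
           reviewer: str, approve: bool) -> None:
    """A human approves or rejects a pending proposal exactly once."""
    status = "APPROVED" if approve else "REJECTED"
    cur = conn.execute(
        "UPDATE proposed_price_actions SET status = ?, reviewer = ? "
        "WHERE id = ? AND status = 'PENDING'",
        (status, reviewer, action_id),
    )
    if cur.rowcount != 1:
        raise ValueError(f"action {action_id} is not pending")
    conn.commit()


def executable_actions(conn: sqlite3.Connection) -> List[Tuple]:
    """Only approved rows are visible to the downstream execution step."""
    return conn.execute(
        "SELECT id, sku, region, proposed_change_pct "
        "FROM proposed_price_actions WHERE status = 'APPROVED'"
    ).fetchall()
```

The point of the design is that the execution step reads only approved rows, so nothing the agent writes can reach a price file without a named reviewer attached to it.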

For the CPG industry, this means a category manager reviewing a recommended promotional price reduction for a slow-moving SKU in the Southeast sees not just the number, but the inventory days-on-hand that triggered it, the competitor shelf price that contextualised it, and the elasticity model that validated it. That is a fundamentally different decision environment than a spreadsheet and a gut feeling.

Do you know?

76% of enterprises now include human-in-the-loop processes in their AI deployments.

In RGM, this isn't a reluctant concession to organizational caution — it is sound commercial practice. Pricing decisions carry margin risk, retailer relationship implications, and competitive signalling consequences that benefit from human judgment at the point of execution. The Mosaic AI Agent Framework is built to support exactly this balance: maximum automation in data processing and recommendation generation, with structured human authority at the decision gate.

One further Databricks Mosaic AI architecture advantage worth naming: the same staged governance design that protects against autonomous pricing errors also surfaces latency realities early. High-volume regional pricing queries processed as nightly batch inference — evaluating thousands of SKUs while the trade team sleeps — are both more operationally appropriate for most CPG workflows and more cost-efficient than synchronous real-time execution. The Mosaic AI Agent Framework supports both patterns; the right choice depends on the cadence of the pricing decision, not the limits of the technology.

Not every RGM workflow needs a Mosaic AI agent. Over 40% of agentic AI projects will be canceled by end of 2027 due to escalating costs, unclear business value, or inadequate risk controls. The discipline is knowing which pricing workflows justify the architecture.

Apply the Mosaic AI Agent Framework when the RGM decision requires synthesizing multiple unstructured and structured data sources simultaneously — competitive shelf data, inventory positions, historical promotional lift, and regional demand signals — in a way that a human analyst cannot sustain continuously at scale. Trade promotion optimisation, dynamic channel pricing, and regional markdown decisions are strong candidates.

Apply simpler Databricks AI workflows — a rules-based Databricks Job, a scheduled Python trigger — when the pricing logic is deterministic and bounded: "reduce price by 5% if inventory exceeds 30 days on shelf." These execute in milliseconds, carry zero hallucination risk, and are cheaper to maintain. The Mosaic AI Agent Framework does not replace rules-based pricing engines. It handles the decisions that rules cannot.
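For contrast, the deterministic rule quoted above needs no agent at all. A minimal sketch, with a hypothetical function name and the 30-day and 5% values taken from the example rule:

```python
def rule_based_price(current_price: float, days_on_shelf: int,
                     threshold_days: int = 30,
                     markdown_pct: float = 5.0) -> float:
    """Apply a fixed markdown once inventory has sat longer than the threshold."""
    if days_on_shelf > threshold_days:
        return round(current_price * (1 - markdown_pct / 100), 2)
    return current_price
```

A function like this can run inside a scheduled job, is trivially testable, and always returns the same answer for the same inputs.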

The RAG components of the sub-agents rely on historical data to ground recommendations. When a market shock has no precedent in the Vector index — a commodity price spike, a sudden competitor delisting — the Mosaic AI Agent Framework's human-in-the-loop governance gate becomes its most important safeguard. Rather than generating a confident but ungrounded recommendation, the system is configured to flag the anomaly and route it to a senior revenue manager for manual response. This is not a system failure; it is the system working as designed.
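One simple way to implement such a flag is a similarity floor: if nothing in the index is close enough to the incoming signal, escalate instead of recommending. The sketch below uses cosine similarity over plain lists; the `route` function, its return labels, and the 0.75 threshold are illustrative assumptions, not settings from Databricks Vector Search.

```python
import math
from typing import Sequence


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def route(query_vec: Sequence[float],
          index_vecs: Sequence[Sequence[float]],
          min_similarity: float = 0.75) -> str:
    """Escalate when no indexed precedent is similar enough to the query."""
    best = max((cosine(query_vec, v) for v in index_vecs), default=0.0)
    if best >= min_similarity:
        return "AGENT_RECOMMENDATION"
    return "ESCALATE_TO_HUMAN"
```

The threshold would need tuning against real embeddings; the design choice that matters is that a weak retrieval result changes the routing rather than being passed silently to the model.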

Maintaining production quality requires ongoing ML engineering investment — monitoring MLflow for prompt drift, refreshing Vector Search embeddings as competitive data evolves, and running Mosaic AI Agent Framework evaluation cycles against updated baselines. Teams that build the agent and move on will see performance degrade quietly. Teams that treat the Databricks AI workflow as a living system — requiring the same discipline as any production data pipeline — will see it compound in value over time.
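A recurring evaluation cycle can be as simple as comparing current quality scores with a stored baseline and alerting on a drop. This sketch assumes the scores are numeric ratings between 0 and 1 produced by whatever evaluation process the team runs; the function name and the 0.05 tolerance are hypothetical.

```python
from statistics import mean
from typing import Sequence


def has_regressed(baseline_scores: Sequence[float],
                  current_scores: Sequence[float],
                  tolerance: float = 0.05) -> bool:
    """True when the current mean score falls below baseline by more than tolerance."""
    if not baseline_scores or not current_scores:
        raise ValueError("both score lists must be non-empty")
    return mean(current_scores) < mean(baseline_scores) - tolerance
```

Run on a schedule against a fixed set of evaluation queries, a check like this turns quiet degradation into an explicit signal.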

Mosaic AI Agent Evaluation allows stakeholders, even those outside the Databricks Platform, to assess model outputs and provide ratings to help iterate on quality.

MLflow Tracing provides full observability into every Databricks AI workflow execution step, which is essential for monitoring prompt drift, debugging Mosaic AI agent performance, and maintaining RGM recommendation quality over time.

The shift from dashboard-driven to agent-driven revenue management is not primarily a technology decision. It's an operating model decision. The technology — Databricks Mosaic AI, Unity Catalog, MLflow, Vector Search — is mature enough to support production deployment today. What determines whether a deployment succeeds is the governance design, the RGM workflow architecture, and the honesty about where automation should stop and category manager judgment should begin.

Polestar Analytics has structured this transition for enterprise clients across retail and CPG, combining the Databricks Mosaic AI architecture with implementation discipline that accounts for organizational friction as deliberately as it accounts for technical design. The question is no longer whether agentic revenue management works. It's whether your trade and pricing infrastructure is ready to support it — and whether your organization is ready to act on what it finds.
