
With AI spend hitting record highs in 2026, enterprises face a tougher question: how to govern, prioritize, and convert investment into returns.

Editor’s note: How does an industry spend $2.5 trillion on AI in a single year and still leave 75% of its leaders without meaningful returns to show for it?

That is not a failure of ambition. Boards are committed. Budgets reflect it. AI spending in 2026 is not a discretionary line item — for most enterprises above $500M, it now represents roughly 5% of total revenue. The gap between investment and return is a governance failure. And it is getting more expensive every quarter it goes unaddressed.

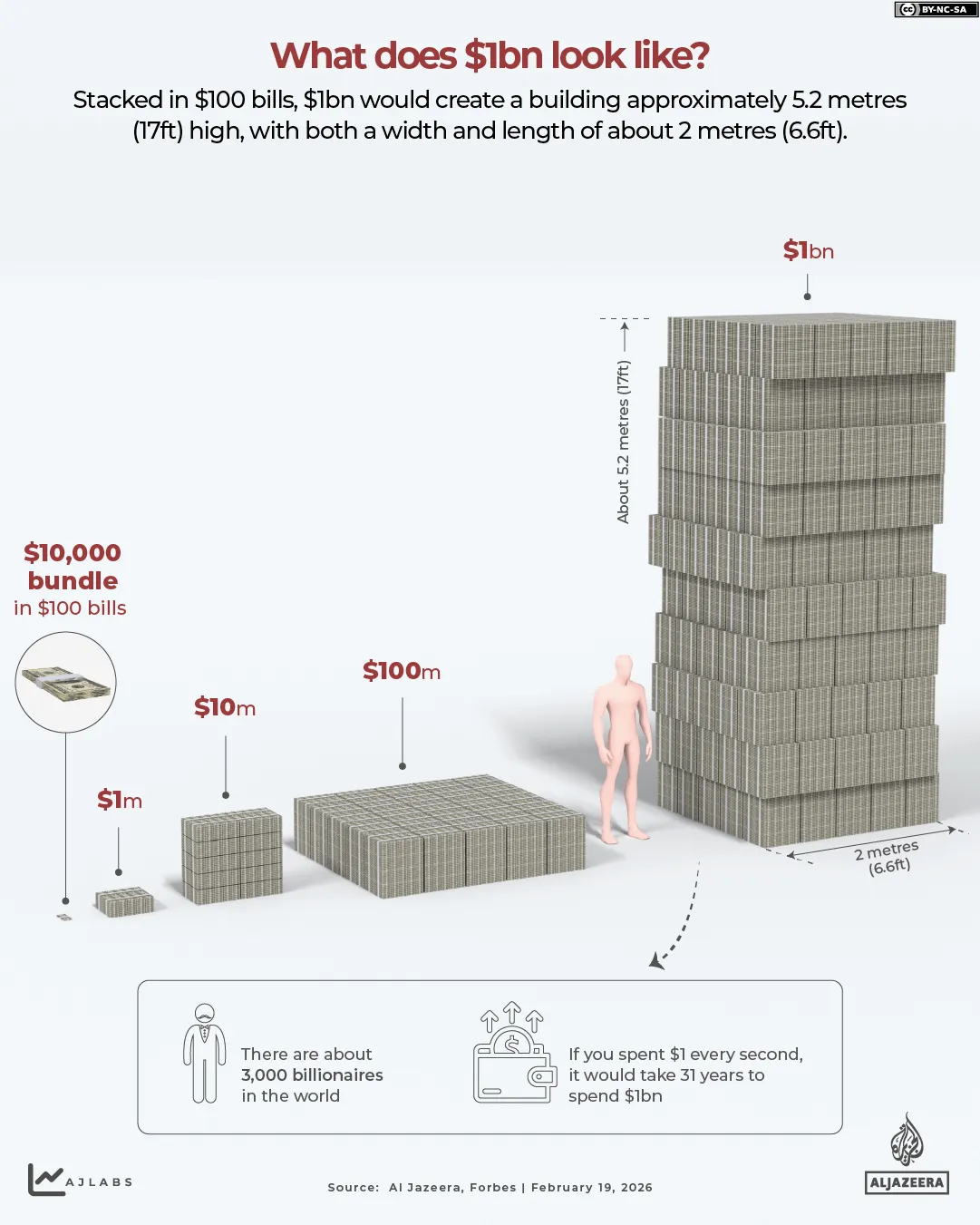

Gartner forecasts worldwide AI spending of $2.5 trillion in 2026, a 44% increase over 2025. The bulk is flowing into AI infrastructure ($1.37 trillion), followed by services ($589 billion) and software ($452 billion). For scale: total global corporate AI investment between 2013 and 2024 reached $1.6 trillion, surpassing the combined inflation-adjusted cost of the Manhattan Project, the Apollo Program, and the entire US Interstate Highway System. We will spend more than that in 2026 alone.

What makes this moment distinct is not the scale of capital. It is the speed at which it has moved without a corresponding movement in returns.

BCG surveyed 1,803 senior executives across 19 markets and found a paradox that should make every AI budget owner pause: 75% of leaders rank AI as a top-three strategic priority. Only one in four reports meaningful returns.

When returns don't show up, the instinct is to blame the stack: the wrong model, messy data. But the companies generating strong AI returns are not running more sophisticated models than everyone else. They run comparable models on similar data. What they have is better-governed programs. The gap between the three-quarters of leaders without meaningful returns and the quarter with them is a governance gap, and it is quietly widening while the industry debates infrastructure.

The Gartner figures tell you where dollars are landing. BCG's IT Spending Pulse tells you something more important: where they are coming from.

Enterprises are not simply adding AI spend — they are actively defunding legacy infrastructure, traditional systems management, and older hardware to free the capital. AI and GenAI, along with enabling technologies like cloud services and security, are seeing the largest spending increases. The sharpest decreases are in established areas: systems and services management, devices, and server infrastructure.

For companies above $500 million in revenue, AI spending now represents approximately 5% of total revenue. Not 5% of the IT budget: total revenue. That single reallocation reframes the entire governance question. AI is no longer competing for discretionary technology spend. It has displaced other strategic priorities entirely, which means the cost of a poorly governed AI program is no longer a wasted IT initiative. It is a wasted strategic cycle, and in markets moving this fast, that loss compounds.

There is a second dynamic worth naming. Companies are reducing their vendor rolls almost everywhere — except AI and GenAI, and to a lesser extent analytics. The rationale is defensible in the short term: an open vendor landscape lets organizations experiment before committing. But as a multi-year posture, AI vendor fragmentation compounds hidden cost and governance complexity in ways that are expensive to unwind. The organizations building durable AI advantage in 2027 are the ones making consolidation decisions now, not indefinitely deferring them.

When AI programs fail to produce returns, the failure is almost never at the model layer. It happens two floors below, in how the program is structured, measured, and owned. Three patterns account for most of that gap.

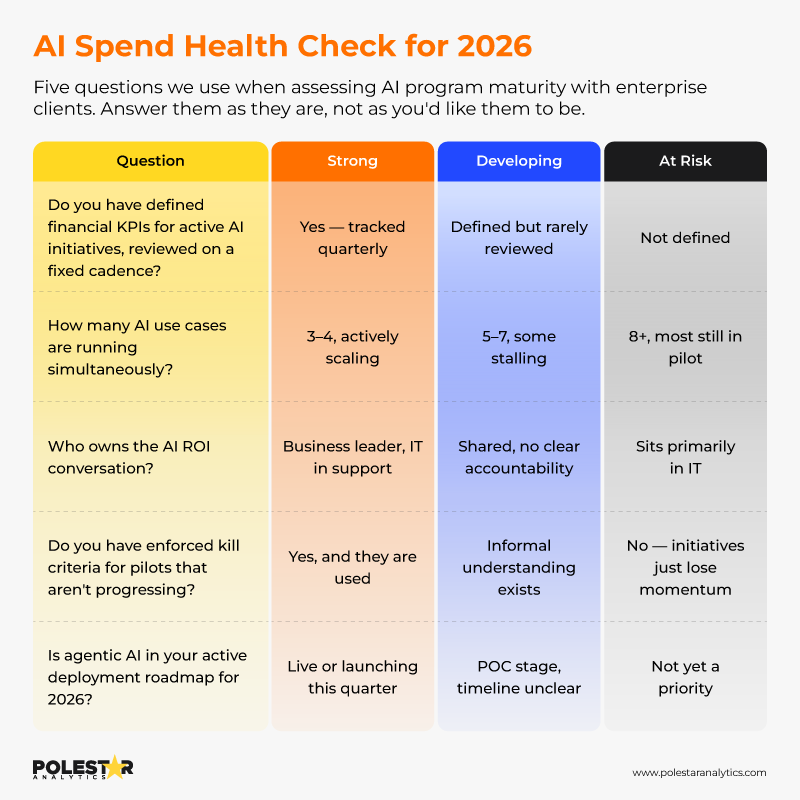

BCG's data shows the average enterprise runs 6.1 AI use cases simultaneously. Companies generating the strongest returns run 3.5 — and produce 2.1x greater ROI as a result.

Twelve pilots running at once looks like a robust AI program. What it actually represents is twelve hedged bets, none of which has received the resource concentration required to reach production at scale. Nobody has made a conviction call. Nobody is fully accountable for outcomes. Every quarterly review surfaces "promising early results" that never convert into business impact. The organizations winning on AI are not the ones with the longest initiative list. They are the ones with the discipline to cut it down to the three or four bets that actually matter — and then resource those properly.
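The concentration argument can be made concrete with BCG's two averages. Assume, purely for illustration, that a fixed AI budget $B$ is split evenly across use cases. Neither the fixed budget nor the even split comes from the BCG data. Under those assumptions, the resources behind each use case compare as:

$$
\frac{B / 3.5}{B / 6.1} = \frac{6.1}{3.5} \approx 1.74
$$

In other words, each bet in a 3.5-use-case portfolio would receive about 74% more resources than one in a 6.1-use-case portfolio. This is a resourcing ratio, not an ROI estimate. The 2.1x ROI figure is BCG's own finding.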

Nearly 60% of organizations define no financial KPIs for their AI investments. A third track nothing at all. Adoption rates and model accuracy scores are build metrics — they confirm the system exists, not what it is worth. When your CFO asks what this quarter's AI spend returned, "the model is live and usage is up" is not an answer. It is a description.

The absence of financial measurement is not neutral. It makes every AI program unfalsifiable — it can never truly succeed or fail, it can only persist until the budget cycle forces a conversation nobody is prepared to have.

When AI strategy lives entirely within IT, it naturally optimizes for what IT controls: architecture decisions, vendor selection, technical performance. The business functions that were supposed to extract value end up downstream of every decision, with no stake in outcomes and no accountability for returns. This is where ROI quietly disappears — not in the technology, but in the structural gap between the team that builds and the team that benefits.

Effective AI governance in 2026 is not about adding process. It is about placing the right decisions in the right hands, at the right cadence — and building the organizational muscle to exit what is not working before it drains the program of credibility.

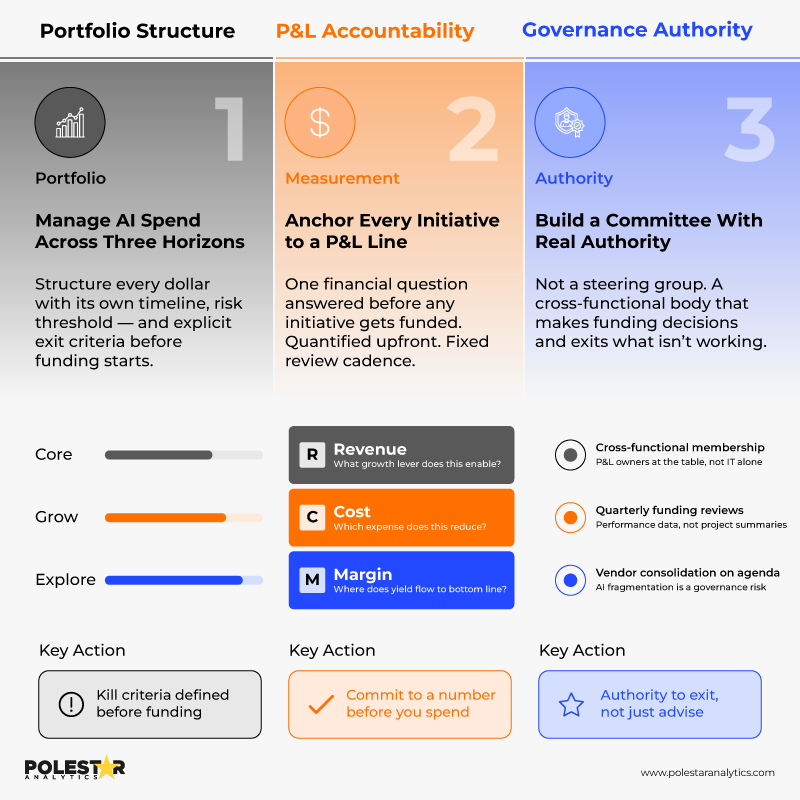

Structure investment across three horizons:

Each horizon carries different risk thresholds and different success benchmarks. More critically, each requires defined exit criteria — the specific conditions under which a pilot gets killed rather than indefinitely extended.

There is a meaningful difference between a portfolio and a list. A portfolio has allocation logic, performance thresholds, and active rebalancing. A list just grows. Organizations that cannot kill underperforming initiatives cannot govern a portfolio — and they signal to the business that accountability does not actually apply to AI.
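Exit criteria and active rebalancing can be made concrete in code. The following is a minimal, hypothetical sketch in Python, not a Polestar or BCG method. The `Initiative` fields, the per-horizon review runways in `RUNWAY_BY_HORIZON`, the initiative names, and the scale/continue/kill rule are all assumptions for illustration. Each initiative carries a financial target committed before funding. At each fixed-cadence review, an initiative is scaled if it has reached its target, killed if its runway is exhausted, and otherwise continued.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    SCALE = "scale"
    CONTINUE = "continue"
    KILL = "kill"


# Hypothetical runway per horizon: how many fixed-cadence reviews an initiative
# gets to reach its committed target before the exit criterion triggers.
RUNWAY_BY_HORIZON = {1: 2, 2: 4, 3: 8}


@dataclass
class Initiative:
    name: str
    horizon: int            # 1, 2 or 3 (hypothetical labels for the three horizons)
    target_value: float     # financial value ($M) committed before funding
    realized_value: float   # financial value ($M) measured at the latest review
    reviews_elapsed: int    # fixed-cadence reviews completed so far


def review(item: Initiative) -> Decision:
    """Apply hypothetical exit criteria to a single initiative."""
    if item.target_value <= 0:
        # No quantified financial target: treat it as unfundable.
        return Decision.KILL
    if item.realized_value >= item.target_value:
        return Decision.SCALE
    if item.reviews_elapsed >= RUNWAY_BY_HORIZON[item.horizon]:
        # Runway exhausted without reaching the target: kill rather than extend.
        return Decision.KILL
    return Decision.CONTINUE


def review_portfolio(items: list[Initiative]) -> dict[str, Decision]:
    """Review every initiative so the portfolio is rebalanced, not just appended to."""
    return {item.name: review(item) for item in items}


# Hypothetical sample portfolio.
portfolio = [
    Initiative("financial-close agent", 1, 2.0, 2.4, 2),
    Initiative("supply-chain exceptions pilot", 2, 5.0, 1.0, 4),
    Initiative("sales-intelligence pilot", 2, 3.0, 1.2, 1),
]

for name, decision in review_portfolio(portfolio).items():
    print(f"{name}: {decision.value}")
```

With the sample portfolio, the loop prints `scale`, `kill`, and `continue` for the three initiatives, in that order. The design point is that no branch lets an initiative run past its runway, so the list cannot simply grow.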

Before any initiative gets funded, it should answer one of three questions:

One of the three. Quantified upfront. Reviewed on a fixed cadence.

BCG found that AI leaders establish clear financial targets before deployment, not after. Committing to a directional financial thesis before spending is the single discipline that most consistently separates programs that earn continued investment from ones that quietly drain budget until someone finally asks the hard question. The commitment does not need to be precise — it needs to exist.

This is not a steering group. Not a monthly status update. The structure that works looks like this:

The organizations building durable AI programs are not the ones with the most sophisticated governance frameworks on paper. They are the ones with the organizational will to act on what the data shows — including when that means stopping something.

What your results tell you:

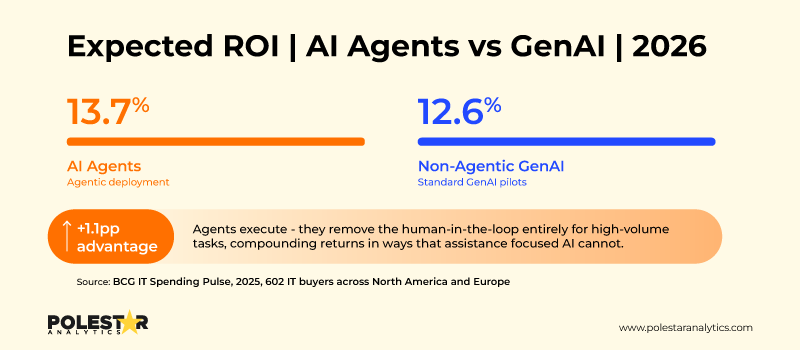

One number worth paying attention to in 2026: 58% of enterprises are already deploying AI agents. Another 35% are actively evaluating. Only 7% remain on the sidelines — and that group is shrinking fast.

The reason is economic. Enterprises report expected ROI of 13.7% from AI agents versus 12.6% from non-agentic GenAI. That 1.1-point gap, roughly 9% higher in relative terms, reflects what agents actually do differently.

They execute. In high-volume, process-intensive functions such as supply chain exception handling, financial close automation, sales intelligence, and compliance monitoring, agents are outperforming because they remove the human-in-the-loop bottleneck rather than merely assisting the people in it.

Our view: when prioritizing AI investments for 2026, agentic deployment in process-intensive functions should rank above additional GenAI pilots in the same domain. The ROI case is stronger. The change management is often simpler — agents work within existing workflows rather than requiring them to change. And the compounding effect of getting agents into production earlier is significant.

Every governance framework eventually hits the same wall: the infrastructure beneath it wasn't built to support it.

You cannot measure AI ROI without governed data pipelines. You cannot run a meaningful portfolio review without real-time visibility into what is live, what it costs, and what it is producing. And you cannot scale what was built in a siloed pilot environment. The board-level governance conversation only holds when the operational layer beneath it actually functions.

This is the gap most enterprises don't see until it's too late — and it's the gap 1Platform was built to close.

1Platform is Polestar Analytics' converged data and AI ecosystem — purpose-built to unify data management, GenAI, and agentic AI into a single governed environment. Rather than patching together separate tools for pipelines, dashboards, and AI models, 1Platform brings them into one execution layer — so the decisions that matter at the board level are grounded in infrastructure that actually works at the operational level.

It does three things that most enterprises are currently doing across three disconnected systems:

More than 80% of AI pilots fail to scale — not because the models underperform, but because they are built on fragmented data estates disconnected from business workflows. 1Platform is the infrastructure answer to that problem: governed pipelines, unified execution, and measurement at the same layer as the work itself.

The enterprises closing the AI ROI gap are not doing it with better governance documents. They are doing it with better infrastructure underneath their governance.

The difference is not the technology. It is the infrastructure built around it.