
AI adoption inside GCCs is accelerating faster than governance readiness. Around 92% of GCCs in India are actively piloting or scaling AI initiatives, yet more than 70% lack mature frameworks to measure ROI, risk, or governance controls around these deployments.

The role of GCCs in enterprise AI transformation has therefore become far more consequential. What began as a cost-arbitrage model has evolved into a mandate to build and scale AI capabilities for global enterprises — and with that shift comes a level of data and regulatory exposure most GCC operating models were not originally designed to manage.

A single capability center may simultaneously process personal data governed by GDPR, CCPA, and India’s DPDP Act, 2023 — each with different consent requirements, breach notification timelines, and cross-border data transfer rules that do not naturally align.

Building a GCC framework for AI and data governance is no longer optional. Running AI workloads across jurisdictions without an architecture designed for these regulatory realities is not a sustainable risk strategy — it is simply exposure waiting to surface.

The global average cost of a data breach reached USD 4.44 million in 2025. The US average hit an all-time high of USD 10.22 million in the same period. For GCCs, the jurisdiction with the strictest enforcement sets the floor — not the global average.

The governance gap between AI adoption and AI oversight is not a perception problem; it is a documented structural one. Despite rapid enterprise adoption of artificial intelligence, governance frameworks are not keeping pace.

While approximately 75% of organizations report using generative AI technologies, only about one-third have implemented responsible AI governance controls across the enterprise.

This points to a substantial gap between deployment and oversight.

A data governance framework for GCC operations is not a compliance checklist. It is the operational architecture that allows GCC AI to be deployed at scale without creating unacceptable regulatory or reputational exposure. It operates across three connected layers.

Most GCCs operate a flat data architecture, in which everything is ingested into a central cloud repository to break down silos. When GDPR and the DPDP Act apply simultaneously to that flat architecture, a significant volume of data has typically already crossed borders without the legal instruments required to support the transfer.
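To make the exposure concrete, here is a minimal Python sketch of the opposite pattern: jurisdiction-aware routing that keeps each record in its home region and refuses a cross-border move unless a transfer mechanism is on record. The region names, the `HOME_REGION` and `TRANSFER_MECHANISMS` tables, and the `route_record` function are hypothetical illustrations, not legal guidance or a reference implementation.

```python
from dataclasses import dataclass

# Hypothetical mapping from the data subject's jurisdiction to its home storage region.
HOME_REGION = {
    "EU": "eu-central",   # GDPR
    "US-CA": "us-west",   # CCPA
    "IN": "in-south",     # DPDP Act, 2023
}

# Hypothetical register of documented transfer mechanisms, keyed by
# (source jurisdiction, destination region). The value names the legal basis.
TRANSFER_MECHANISMS = {
    ("EU", "in-south"): "SCC-2021",  # illustrative entry only
}


@dataclass
class Record:
    record_id: str
    jurisdiction: str  # jurisdiction of the data subject
    payload: dict


class TransferBlocked(Exception):
    """Raised when data would leave its home region without a documented basis."""


def route_record(record: Record, processing_region: str) -> str:
    """Return the region the record may be processed in, or raise TransferBlocked."""
    home = HOME_REGION.get(record.jurisdiction)
    if home is None:
        # Unknown jurisdiction: fail closed rather than guess.
        raise TransferBlocked(f"{record.record_id}: unknown jurisdiction {record.jurisdiction!r}")
    if processing_region == home:
        return home  # data stays in-region, no transfer involved
    if (record.jurisdiction, processing_region) not in TRANSFER_MECHANISMS:
        raise TransferBlocked(
            f"{record.record_id}: {record.jurisdiction} -> {processing_region} has no transfer mechanism"
        )
    return processing_region
```

The design choice is to fail closed: a record with an unknown jurisdiction, or a transfer with no recorded basis, is blocked instead of silently landing in the central repository.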

What needs to change — structurally:

The cost trade-off to plan for: Cloud costs typically rise 15–20% when moving from a centralised to a sovereign multi-region architecture.

Multi-cloud complexity is frequently cited as the leading driver of unplanned cloud cost increases. Budget for this before the migration begins, not when it surfaces mid-project.
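As a back-of-the-envelope budgeting aid, the 15 to 20% uplift can be turned into a range before the migration starts. This is a minimal sketch: the `sovereign_budget_range` helper is hypothetical, and the optional `contingency` buffer for multi-cloud complexity is an assumption you would calibrate against your own spend history.

```python
def sovereign_budget_range(baseline_annual_cost: float,
                           uplift_low: float = 0.15,
                           uplift_high: float = 0.20,
                           contingency: float = 0.0) -> tuple[float, float]:
    """Estimate annual cloud cost after moving to a sovereign multi-region setup.

    baseline_annual_cost: current centralised cloud spend.
    uplift_low / uplift_high: the 15-20% rise cited above, as fractions.
    contingency: hypothetical extra fraction added on top of the uplift
                 to cover multi-cloud complexity.
    """
    if baseline_annual_cost < 0:
        raise ValueError("baseline cost must be non-negative")
    low = baseline_annual_cost * (1 + uplift_low + contingency)
    high = baseline_annual_cost * (1 + uplift_high + contingency)
    return low, high
```

For a centre currently spending 1,000,000 a year, `sovereign_budget_range(1_000_000)` gives roughly 1.15M to 1.20M, and adding `contingency=0.05` moves the upper bound to about 1.25M.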


Governance policy documents fail when they rely on human discipline at the moment it is least available: during sprint deadlines, urgent client turnarounds, or debugging sessions. The controls need to be structural, not aspirational.

A. Shadow AI: The Risk Already Inside Your Perimeter

Shadow AI is the use of AI tools that the organisation has not sanctioned or vetted. In a GCC context, this means proprietary source code, client financial models, and customer records are being pasted into public LLMs with no data residency controls and no audit trail. Banning tools pushes usage underground. The effective response is making the safe lane easier to use than the unsafe one.

The Secure Gateway architecture:
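The core of such a gateway is a single choke point that every prompt passes through before it reaches an external model: sensitive values are redacted and each request is logged. The sketch below is a minimal illustration under stated assumptions; the regex detectors, the `forward` callable, and the in-memory `AUDIT_LOG` are hypothetical stand-ins for a real DLP service, an approved model endpoint, and a tamper-evident log store.

```python
import hashlib
import re
from datetime import datetime, timezone
from typing import Callable

# Hypothetical, deliberately simple detectors. A production gateway would
# call a proper DLP / PII-classification service instead of a few regexes.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "AWS_KEY": re.compile(r"AKIA[0-9A-Z]{16}"),
    "CARD": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),
}

AUDIT_LOG: list[dict] = []  # stand-in for an append-only audit store


def redact(text: str) -> tuple[str, dict[str, int]]:
    """Replace each match with a [LABEL] placeholder and count what was removed."""
    counts: dict[str, int] = {}
    for label, pattern in PATTERNS.items():
        text, n = pattern.subn(f"[{label}]", text)
        if n:
            counts[label] = n
    return text, counts


def gateway(user: str, prompt: str, forward: Callable[[str], str]) -> str:
    """Redact the prompt, record an audit entry, then call the approved model."""
    clean, counts = redact(prompt)
    AUDIT_LOG.append({
        "ts": datetime.now(timezone.utc).isoformat(),
        "user": user,
        # Hash of the original prompt: identifies what was submitted without storing it.
        "prompt_sha256": hashlib.sha256(prompt.encode()).hexdigest(),
        "redactions": counts,
    })
    return forward(clean)
```

In use, `forward` would wrap whichever enterprise-approved LLM endpoint the GCC has contracted (a hypothetical `call_approved_llm`, for instance), so developers get the same convenience as a public chatbot while the audit trail and residency controls stay in place.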

B. Model Explainability (XAI) — Non-Negotiable for Regulated Verticals
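For a linear or logistic-regression scorecard, a per-decision explanation can be computed exactly: each feature's contribution is its weight times its deviation from a reference (baseline) value, and the contributions sum to the difference between the applicant's score and the baseline score. The sketch assumes such a linear model; the `linear_contributions` helper and any feature names you pass in are hypothetical. Non-linear models would need a dedicated attribution method such as SHAP, which this does not implement.

```python
def linear_contributions(weights: dict[str, float],
                         baseline: dict[str, float],
                         applicant: dict[str, float]) -> list[tuple[str, float]]:
    """Per-feature contribution to a linear score (log-odds for logistic models).

    contribution_i = w_i * (x_i - baseline_i). The contributions sum to
    score(applicant) - score(baseline), so nothing is left unexplained.
    Results are sorted by absolute impact, largest first.
    """
    contribs = [(f, w * (applicant[f] - baseline[f])) for f, w in weights.items()]
    return sorted(contribs, key=lambda fc: abs(fc[1]), reverse=True)
```

With hypothetical weights such as `{"debt_to_income": -2.0, "years_employed": 0.3, "missed_payments": -0.8}`, the top entries of the returned list are the principal reasons a given applicant scored below the baseline, which is the kind of decision-level justification regulated verticals ask for.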

C. Bias Detection — Before Deployment, Not After a Complaint
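One common pre-deployment check is the disparate impact ratio: each group's favourable-outcome rate divided by the rate of the most favoured group, with ratios below 0.8 flagged under the "four-fifths" rule of thumb from US employment guidance. The sketch assumes binary predictions and a single protected attribute; the `disparate_impact` helper is hypothetical, and a real fairness review would examine several metrics rather than one.

```python
from collections import defaultdict


def disparate_impact(predictions: list[int], groups: list[str],
                     threshold: float = 0.8) -> dict[str, tuple[float, bool]]:
    """Return {group: (ratio_to_best_group, flagged)} for binary predictions (1 = favourable)."""
    if len(predictions) != len(groups):
        raise ValueError("predictions and groups must be the same length")
    positives: dict[str, int] = defaultdict(int)
    totals: dict[str, int] = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += pred
    # Favourable-outcome rate per group.
    rates = {g: positives[g] / totals[g] for g in totals}
    best = max(rates.values())
    if best == 0:
        raise ValueError("no favourable outcomes in any group")
    # Ratio to the most favoured group; flag anything under the threshold.
    return {g: (r / best, r / best < threshold) for g, r in rates.items()}
```

Run on a hold-out set before promotion, a flagged group becomes a release blocker rather than something discovered after a complaint.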

D. Continuous Model Monitoring (MLOps)
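A widely used drift signal in MLOps pipelines is the Population Stability Index (PSI), which compares the binned distribution of a feature or model score in production against the training baseline: PSI = Σ (actual − expected) · ln(actual / expected). The sketch assumes pre-binned proportions; the 0.1 and 0.25 alert bands are common industry rules of thumb rather than a standard and should be tuned per model.

```python
import math


def psi(expected: list[float], actual: list[float], eps: float = 1e-6) -> float:
    """Population Stability Index between two binned distributions.

    expected: bin proportions from the training / reference data.
    actual:   bin proportions observed in production (same bins).
    eps floors empty bins so the logarithm stays defined.
    """
    if len(expected) != len(actual):
        raise ValueError("distributions must use the same bins")
    total = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)
        total += (a - e) * math.log(a / e)
    return total


def drift_status(score: float, warn: float = 0.1, alert: float = 0.25) -> str:
    """Map a PSI value to a status using commonly cited rule-of-thumb bands."""
    if score >= alert:
        return "alert"
    if score >= warn:
        return "warn"
    return "stable"
```

Scheduling this per feature and per score, and routing "alert" results to a named model owner, turns monitoring from a dashboard into an accountable control.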

One in four failed AI initiatives traces back to weak governance, and more than half of executives report having no clear approach to managing AI risk or accountability.

E. Role-Based Access Control (RBAC) on Data

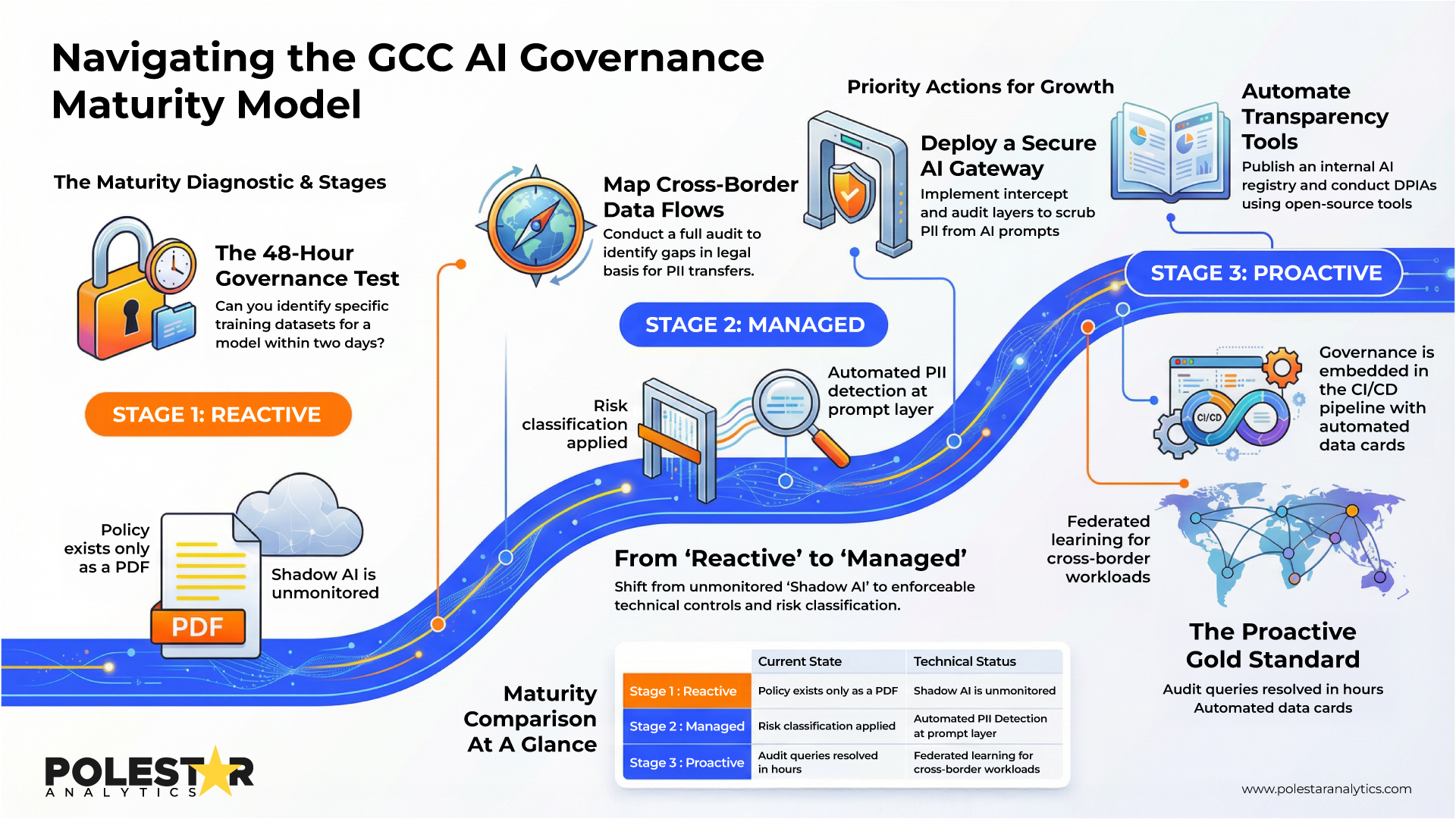
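At its simplest, role-based access control on data maps each role to the actions it may perform and the most sensitive data tier it may touch, and denies everything else by default. The sketch below uses hypothetical roles, classification tiers, and a `can_access` helper to show that deny-by-default shape; in practice the same policy would be enforced in the data platform's own policy engine rather than in application code.

```python
from enum import IntEnum


class Sensitivity(IntEnum):
    """Hypothetical classification tiers, ordered from least to most sensitive."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3  # e.g. personal data in scope of GDPR or the DPDP Act


# Hypothetical role policy: (highest tier the role may touch, allowed actions).
ROLE_POLICY = {
    "analyst": (Sensitivity.INTERNAL, {"read"}),
    "data_scientist": (Sensitivity.CONFIDENTIAL, {"read", "train"}),
    "data_steward": (Sensitivity.RESTRICTED, {"read", "write", "grant"}),
}


def can_access(role: str, action: str, dataset_tier: Sensitivity) -> bool:
    """Deny by default: unknown roles, disallowed actions, or higher tiers get no access."""
    policy = ROLE_POLICY.get(role)
    if policy is None:
        return False
    max_tier, actions = policy
    return action in actions and dataset_tier <= max_tier
```

Note that a data scientist here can train on confidential data but not on restricted personal data, which is exactly the boundary a model-training pipeline should not be able to cross without an explicit grant.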

Understanding how to build AI governance in a GCC requires mapping where you currently are, not where the policy document says you should be. Most GCCs sit at one of three stages, and knowing which one applies determines what the next quarter should look like.

A practical self-diagnostic first: can your organisation answer "Which specific datasets trained your fraud detection model?" within 48 hours? If not, governance is reactive, not managed.
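Passing that test is mostly a record-keeping problem: if every training run registers the exact dataset versions it consumed, lineage becomes a lookup rather than an investigation. Here is a minimal sketch of such a registry; the `ModelRegistry` class, `DatasetVersion` fields, and the content-hash scheme are hypothetical, and in practice an experiment tracker such as MLflow or a data catalogue would usually play this role.

```python
import hashlib
from dataclasses import dataclass, field


@dataclass(frozen=True)
class DatasetVersion:
    name: str
    uri: str
    content_sha256: str  # fingerprint of the exact snapshot used


@dataclass
class ModelRegistry:
    # model version -> datasets used to train it
    lineage: dict[str, list[DatasetVersion]] = field(default_factory=dict)

    def register_training_run(self, model_version: str,
                              datasets: list[DatasetVersion]) -> None:
        """Record lineage once; a model version's training data must not be rewritten."""
        if model_version in self.lineage:
            raise ValueError(f"{model_version} already registered; lineage is immutable")
        self.lineage[model_version] = list(datasets)

    def training_data_for(self, model_version: str) -> list[DatasetVersion]:
        """Answer 'which datasets trained this model?' directly from the record."""
        return list(self.lineage[model_version])


def fingerprint(data: bytes) -> str:
    """Content hash so a dataset version can be matched to the bytes actually used."""
    return hashlib.sha256(data).hexdigest()
```

The key design choice is that registration happens inside the training pipeline and is write-once, so the 48-hour question is answered by a function call instead of an email thread.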

| Stage | What It Looks Like | Priority Actions |
|---|---|---|
| Stage 1: Reactive | | |
| Stage 2: Managed | | |
| Stage 3: Proactive | | |

P.S. Unless data management, regulatory obligation mapping, and technical enforcement are closely aligned, governance frameworks will remain policy documents that generate risk instead of managing it.


The role of GCCs in enterprise AI transformation is shifting from execution partner to strategic capability hub. That shift only delivers long-term value if the governance architecture underneath it is built to handle the regulatory, operational, and reputational weight of operating GCC AI at scale.

Governance is not what limits speed. It is the infrastructure that makes speed sustainable. Without it, regulatory risk forces caution at every deployment decision. With it, GCCs can ship high-impact models with confidence.

For organisations evaluating how to move from reactive policy documents to structurally embedded AI governance, independent expertise often accelerates the transition. Polestar Analytics works specifically at the intersection of GCC operating models, AI architecture, and regulatory alignment, helping enterprises design sovereign data architectures, implement AI risk controls, operationalise MLOps governance, and scale AI programmes across jurisdictions without fragmentation.

When governance, data engineering, and AI delivery are aligned by design rather than retrofitted after incidents, GCCs move from being innovation centres in ambition to innovation centres in execution.